Ask Trevor: how Argos' shopping agent works, and how brands should optimise for it


Argos has quietly shipped one of the more interesting shopping agents in UK retail. Ask Trevor is an LLM-powered shopping assistant that sits inside the Argos site. It is designed to answer conversational queries and return a shortlist of products with prices, ratings, and a written justification for each pick.

For shoppers, it feels like talking to a knowledgeable assistant rather than filtering a category page. For brands selling through Argos, it represents a new and largely opaque ranking surface that decides which products get recommended and which get ignored.

This post breaks down how Ask Trevor appears to work under the hood, what its biggest ranking factors look like, and what brands can do to improve their odds of being picked.

What Ask Trevor actually is

Based on our analysis and testing, Trevor is not simply a frontend search experience with a chat skin on top. The frontend is a thin shell. The real system is a server-side conversational product discovery layer that takes a natural language query, turns it into a retrieval task against the Argos catalogue, and then uses an LLM to choose, justify, and present a shortlist back to the user.

The two-stage architecture

Based on how Trevor responds, the system almost certainly works in two stages.

Stage one is retrieval. When the user sends a query, the backend infers a product category, expands the query into structured attributes (size range, price band, key features), and pulls a shortlist of candidate products from the Argos catalogue. This stage is governed by hard signals: in-stock status, ratings, sales velocity, price, attribute match, and probably some commercial weighting.

Stage two is the LLM layer. Once a shortlist exists, the language model picks the best candidate from that list, maps product attributes to user-relevant benefits, and writes a short, structured justification. The LLM is doing reasoning and explanation, not search.
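To make the split concrete, here is a minimal sketch of a retrieve-then-choose pipeline. It is an illustration of the pattern described above, not Argos' implementation: the `Product` fields, the function names, the sort order, and the three catalogue entries are all hypothetical, and the second stage is a simple rule-based stand-in for where a language model would sit.

```python
from dataclasses import dataclass


@dataclass
class Product:
    # Hypothetical catalogue record; field names are assumptions.
    name: str
    category: str
    price: float
    rating: float
    review_count: int
    in_stock: bool
    attributes: dict


def retrieve(catalogue, category, max_price, required_attrs, limit=5):
    """Stage one: hard filters, then a simple sort on rating and review volume."""
    candidates = [
        p for p in catalogue
        if p.in_stock
        and p.category == category
        and p.price <= max_price
        and all(p.attributes.get(k) == v for k, v in required_attrs.items())
    ]
    candidates.sort(key=lambda p: (p.rating, p.review_count), reverse=True)
    return candidates[:limit]


def choose_and_justify(shortlist, context_prefs):
    """Stage two stand-in: pick the candidate matching the most context preferences.

    A real system would pass the shortlist and the query to a language model here.
    """
    if not shortlist:
        return None, "No eligible products."

    def fit(p):
        return sum(1 for k, v in context_prefs.items() if p.attributes.get(k) == v)

    best = max(shortlist, key=fit)
    reasons = [f"{k}: {v}" for k, v in context_prefs.items()
               if best.attributes.get(k) == v]
    detail = ", ".join(reasons) or "the basic requirements"
    return best, f"I chose {best.name} because it matches {detail}"


# Made-up catalogue entries for illustration only.
catalogue = [
    Product("TV A", "tv", 329.0, 4.7, 772, True,
            {"resolution": "4K", "smart_os": "webOS", "size_in": 50}),
    Product("TV B", "tv", 299.0, 4.5, 120, True,
            {"resolution": "4K", "smart_os": None, "size_in": 43}),
    Product("TV C", "tv", 279.0, 4.8, 40, False,
            {"resolution": "4K", "smart_os": "webOS", "size_in": 50}),
]

shortlist = retrieve(catalogue, "tv", 400.0, {"resolution": "4K"})
best, reason = choose_and_justify(shortlist, {"size_in": 50, "smart_os": "webOS"})
print(reason)
```

Note that TV C never reaches the second stage: it is out of stock, so no amount of contextual fit can rescue it. That is the practical meaning of "eligibility" in this kind of design.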

The product_ids field in the payload response is also revealing. When it is empty, Trevor is in open-ended discovery mode and the backend has to find products from scratch. When it is populated, Trevor is in contextual assistance mode, where the user is already looking at a product or a small selection and wants advice about those specific items. The same chat interface supports both jobs.
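The mode switch described above can be sketched in a few lines. Only the `product_ids` field name comes from our observation of the payload; the `query` field, the mode labels, and the function name are hypothetical.

```python
def infer_mode(payload: dict) -> str:
    """Classify a chat payload by whether it already references products.

    Only `product_ids` is taken from the observed payload; everything else
    in this sketch is an assumption.
    """
    product_ids = payload.get("product_ids") or []
    return "contextual_assistance" if product_ids else "open_discovery"


print(infer_mode({"query": "best tv for living room", "product_ids": []}))
print(infer_mode({"query": "is this good for gaming?", "product_ids": ["12345"]}))
```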

What the LLM is actually doing

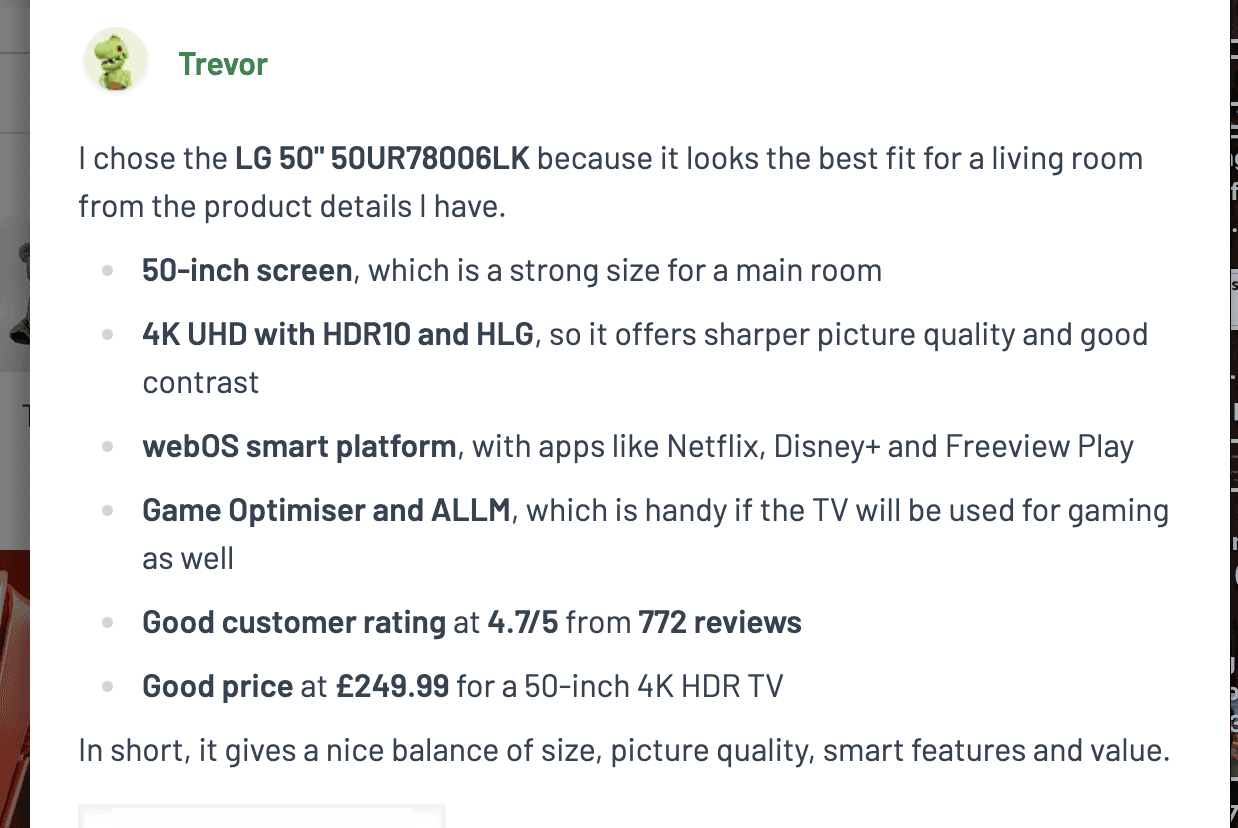

Take a typical Trevor response:

"I chose the LG 50" 50UR78006LK because it looks the best fit for a living room... 50-inch screen gives you a strong size for a main room, 4K UHD for a sharper picture, webOS for apps like Netflix, and Game Optimiser if you want to plug in a console."

Pull that apart and you can see four distinct things the model is doing.

First, selection. The phrase "I chose" implies a shortlist already exists and the model is ranking within it.

Second, feature-to-benefit mapping. Raw catalogue attributes like "50-inch", "HDR10", or "webOS" get translated into shopper-relevant benefits like "strong size for a living room", "better contrast", or "access to apps like Netflix". This is where the LLM adds genuine value. A traditional PLP shows specs. Trevor explains why those specs matter for your specific use case.

Third, context inference. The user said "living room". The model inferred that they probably care about screen size, picture quality, smart features, and value. None of that was stated. The model filled in the implicit intent.

Fourth, synthesis. Phrases like "nice balance of size, picture quality, smart features and value" are not coming from a database. The LLM is compressing multiple signals into a single human-readable judgement.

The model is not discovering products. The model is discovering meaning in products. That distinction matters when you start thinking about how to influence it.

The biggest ranking factors

Trevor's ranking is hybrid. The backend does most of the heavy lifting on hard signals, then the LLM re-ranks for context, balance, and explainability. The factors below are listed in roughly the order they seem to matter.

Backend factors (the ones that decide eligibility)

Category and attribute relevance. The query has to map cleanly to a product category, and the products in that category have to match the inferred attributes. Get this wrong and nothing else matters, because your product never enters the shortlist.

Ratings and review volume. A 4.7 from 772 reviews is not decorative. It is a confidence signal that almost certainly feeds into the ranker. Products with high ratings and meaningful review counts have a structural advantage. Products with no reviews or low ratings are unlikely to surface no matter how well-priced they are.

Price-to-value positioning. Trevor rarely picks the cheapest option and rarely picks the most expensive. It tends to pick something positioned as good value within the range, suggesting the system optimises for a quality-to-price ratio rather than raw price.

Availability and commercial signals. In-stock, deliverable, not discontinued, and probably some weighting for margin or active promotion. Standard retail logic.

Attribute completeness. Products with richer, more complete metadata are easier to match against inferred attributes. A TV listing missing screen size, resolution, or smart OS is much harder to rank for "best tv for living room" because the system cannot confidently answer the inferred filters.

LLM factors (the ones that decide which eligible product wins)

Contextual fit. Once the shortlist exists, the model picks the product that best matches the inferred context. "Living room" pushes toward larger sizes and mainstream brands. "Small flat" or "bedroom" pushes the opposite way. The same backend shortlist can produce different winners depending on the framing.

All-roundness. Trevor consistently uses phrases like "strongest all-round pick". The model favours products that do reasonably well across multiple dimensions over products that are best on one dimension and weak on others. A TV that wins on price but loses on smart features is less likely to be chosen than a TV that is solid on everything.

Explainability. This one is underrated. The LLM prefers products it can justify cleanly in four to six bullets. Products with clear, easily understood features (webOS, Game Optimiser, HDR10) build a better narrative than products with obscure or poorly named specs. If the model cannot easily explain why your product is good, it will quietly pick the one it can explain.

Narrative coherence. Related to explainability. The model wants to write a confident, balanced recommendation. Products that fit a clean story (good for the use case, good value, well-rated, recognisable brand) get chosen over products that require caveats.

What does not seem to matter much

Trevor does not appear to be doing user-level personalisation. There is no user profile, browsing history, or preference data in the request payload. The personalisation is query-level, driven by what the shopper says in this specific message.

It also does not look like a pure semantic vector search. The retrieval feels structured and rules-based, not embedding-driven. That has implications for how brands optimise: keyword and attribute hygiene matters more than writing prose that "sounds like" what shoppers ask.

How brands should optimise for Trevor

1. Get into the eligible shortlist first

Nothing else matters if your product never makes it past the retrieval stage. That means treating Argos' product taxonomy and attribute fields with the same seriousness you would treat Amazon backend attributes or Walmart item type keywords.

Make sure every structured attribute is filled in: dimensions, weight, materials, compatibility, key features, intended use. If your TV listing is missing the smart OS field, you will not surface for queries that infer "smart features matter". If your camping chair is missing weight capacity, you will lose queries about heavier users. Empty fields are silent disqualifications.
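A quick way to find those silent disqualifications is to audit your own listing data before it goes to the retailer. The sketch below assumes you hold each listing as a dictionary; the field names in `REQUIRED_TV_FIELDS` are illustrative, not Argos' actual attribute schema.

```python
# Hypothetical required fields for a TV listing; adjust per category.
REQUIRED_TV_FIELDS = ["screen_size", "resolution", "smart_os", "dimensions", "weight"]


def missing_fields(listing: dict, required: list) -> list:
    """Return required fields that are absent or empty in the listing."""
    return [f for f in required if listing.get(f) in (None, "", [])]


def completeness(listing: dict, required: list) -> float:
    """Share of required fields that are filled in, from 0.0 to 1.0."""
    filled = len(required) - len(missing_fields(listing, required))
    return filled / len(required)


listing = {
    "screen_size": "50 inch",
    "resolution": "4K UHD",
    "smart_os": "",            # present but empty counts as missing
    "dimensions": "112 x 65 x 6 cm",
}

print(missing_fields(listing, REQUIRED_TV_FIELDS))
print(completeness(listing, REQUIRED_TV_FIELDS))
```

Running this across a whole range gives a simple priority list: fix the listings with the lowest completeness first.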

2. Earn ratings and reviews

This is the single biggest lever a brand can pull that Trevor visibly responds to. Products with high star ratings and meaningful review volumes appear to get a structural boost. Investing in post-purchase review collection, addressing negative reviews quickly, and prioritising your best-rated SKUs in your Argos range plan all compound here.

A product with a 4.7 average from 700+ reviews is essentially pre-qualified for Trevor's shortlist. A product with a 4.1 from 30 reviews is fighting uphill no matter how good the listing copy is.
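We do not know how Argos combines rating and review count. One common way rankers turn the pair into a single confidence-weighted number is a Bayesian average, which pulls low-volume ratings toward a category-wide prior. The sketch below uses that technique purely as an illustration; `prior_mean` and `prior_weight` are arbitrary assumptions, and the 4.9-from-5-reviews product is a made-up comparison point.

```python
def adjusted_rating(rating, review_count, prior_mean=4.0, prior_weight=50):
    """Bayesian average: blend the observed rating with an assumed prior.

    prior_mean and prior_weight are illustrative values, not known
    parameters of any retailer's ranker.
    """
    total = prior_weight * prior_mean + review_count * rating
    return total / (prior_weight + review_count)


established = adjusted_rating(4.7, 772)   # the 4.7 from 700+ reviews case
thin = adjusted_rating(4.1, 30)           # the 4.1 from 30 reviews case
new_but_glowing = adjusted_rating(4.9, 5) # hypothetical: great score, tiny sample

print(round(established, 2), round(thin, 2), round(new_but_glowing, 2))
```

Under these assumptions the well-reviewed product keeps almost all of its score, while the product with a handful of glowing reviews is pulled most of the way back to the prior. Volume is what makes a high rating count.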

3. Write listing copy that names benefits, not just specs

The LLM layer rewards explainability. If your title and bullets say "HDR10, webOS 23, 50-inch 4K UHD with Game Optimiser", the model has the raw material to translate that into "great picture quality, easy access to apps, strong size for a living room, good for gaming". If your title says "ULTRA-PREMIUM SMART DISPLAY EXPERIENCE", the model has nothing to work with and will quietly prefer a competitor whose spec sheet is clearer.

Use plain, descriptive language. Name the feature, then immediately name the use case it supports. This is the same instinct that works for Amazon Rufus and Walmart Sparky, and for the same underlying reason: generative shopping agents need to construct a coherent justification, and they will pick the product that gives them the cleanest one.

4. Cover the use cases shoppers actually ask about

Trevor infers context from phrases like "for the living room", "for gaming", "for a small flat", "for my dad". Listings that explicitly reference common use cases give the model an easier path to selecting them.

A practical exercise: list the top ten phrases a shopper might use when asking Trevor for a product in your category. "Best tv for living room", "best tv for bright room", "best tv for football", "best tv for bedroom", and so on. For each phrase, ask whether your listing copy and attributes give the model enough to pick you. If the answer is no, that is your content roadmap.
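That exercise can be run as a rough script. The sketch below checks listing copy against a hand-written map from shopper phrases to the terms that would support them. The supporting terms and the sample listing text are assumptions for illustration, and plain substring matching is a crude proxy for whatever the model actually reads.

```python
def coverage_report(listing_text: str, use_cases: dict) -> dict:
    """Map each shopper phrase to True if any supporting term appears in the copy."""
    text = listing_text.lower()
    return {
        phrase: any(term in text for term in terms)
        for phrase, terms in use_cases.items()
    }


# Shopper phrases with hypothetical supporting terms for each.
use_cases = {
    "best tv for living room": ["living room"],
    "best tv for bright room": ["bright room", "anti-glare"],
    "best tv for football": ["football", "sport"],
    "best tv for bedroom": ["bedroom"],
    "best tv for gaming": ["gaming", "game optimiser", "console"],
}

listing_text = (
    "50-inch 4K UHD TV with HDR10, webOS and Game Optimiser. "
    "A strong size for a living room."
)

report = coverage_report(listing_text, use_cases)
gaps = [phrase for phrase, covered in report.items() if not covered]
print(gaps)
```

The `gaps` list is the content roadmap: each uncovered phrase is a query where the listing gives the model nothing to justify the pick with.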

5. Price for value perception, not for the floor

Trevor does not chase the cheapest product, but it does seem to penalise products that are visibly bad value within their range. A product priced at the top of its category needs justifying features to match. A product priced at the bottom needs to not look like the bargain-bin option. The sweet spot tends to be a competitive mid-range price paired with strong specs and ratings, which is exactly what the LG TV in the original example was.
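A simple sanity check is to see where a product's price sits within its category range and whether its rating supports that position. The sketch below is a rough heuristic of our own, not a reconstruction of Trevor's logic: the thresholds (0.8, 0.1, 4.5) and the category prices are made up for illustration.

```python
def price_position(price, category_prices):
    """Return 0.0 for the cheapest price in the category, 1.0 for the dearest."""
    low, high = min(category_prices), max(category_prices)
    if high == low:
        return 0.5  # single price point: treat as mid-range
    return (price - low) / (high - low)


def value_flag(price, rating, category_prices, min_rating_for_premium=4.5):
    """Flag listings whose price position looks hard to justify.

    All thresholds here are arbitrary assumptions.
    """
    position = price_position(price, category_prices)
    if position >= 0.8 and rating < min_rating_for_premium:
        return "premium price without the ratings to back it"
    if position <= 0.1:
        return "risk of reading as the bargain-bin option"
    return "ok"


category_prices = [199, 249, 299, 349, 499]  # made-up prices in pounds

print(price_position(349, category_prices))
print(value_flag(349, 4.7, category_prices))
print(value_flag(499, 4.2, category_prices))
```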

6. Track which products win and reverse-engineer

Trevor is a black box, but you can probe it. Run the same five or ten queries every month for your category. Note which products appear, which features the model emphasises, and how the answers change when you tweak the query (adding "for gaming", "under £300", "for a small space"). Over time you will build a working model of which signals matter most in your category, which is far more useful than guessing from the outside.
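If you record each month's results by hand, a few lines of code will show what changed. The sketch below assumes a simple log format of our own invention (`month`, `query`, `products`) with made-up entries; it does not call Trevor or any Argos API.

```python
def picks_by_query(observations, month):
    """Collect the set of recommended products per query for one month."""
    return {
        o["query"]: set(o["products"])
        for o in observations
        if o["month"] == month
    }


def diff_months(observations, before, after):
    """For queries run in both months, list products that entered or dropped out."""
    old = picks_by_query(observations, before)
    new = picks_by_query(observations, after)
    report = {}
    for query in sorted(old.keys() & new.keys()):
        report[query] = {
            "entered": sorted(new[query] - old[query]),
            "dropped": sorted(old[query] - new[query]),
        }
    return report


# Hand-recorded observations; all values are made up for illustration.
observations = [
    {"month": "month-1", "query": "best tv for living room", "products": ["TV A", "TV B"]},
    {"month": "month-2", "query": "best tv for living room", "products": ["TV A", "TV C"]},
    {"month": "month-1", "query": "best tv for gaming", "products": ["TV B"]},
    {"month": "month-2", "query": "best tv for gaming", "products": ["TV B"]},
]

changes = diff_months(observations, "month-1", "month-2")
for query, delta in changes.items():
    print(query, delta)
```

Keeping the query wording identical from month to month matters here: a change in the results should reflect a change in Trevor or the catalogue, not a change in how you asked.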

➡️ If you want a more immediate understanding of your visibility in Ask Trevor or how to optimise your listings for it, get in touch with Azoma for a demo of our Retailer AI intelligence platform.

The bigger picture

Ask Trevor is another example of where retail discovery is heading. The traditional category page is being replaced by a conversation. The traditional ranking factors (keywords, price, position) are being supplemented by new ones (explainability, narrative fit, attribute completeness). The brands that adapt their listing strategy for this new layer will earn a disproportionate share of the recommendations being made by retailers' own AI shopping agents.

The thing to internalise is that Trevor and its peers are not search engines with a chat interface. They are recommendation engines that have to defend their picks in writing. Make your products easy to defend, and you make them easy to pick.

➡️ If you are looking to optimise your product listings for AI retailer assistants like Ask Trevor, Amazon Rufus or Walmart Sparky, get in touch with Azoma for a demo today.

Article Author: Max Sinclair