Amazon Rufus comes out of beta as Alexa for Shopping: What it means for Brands

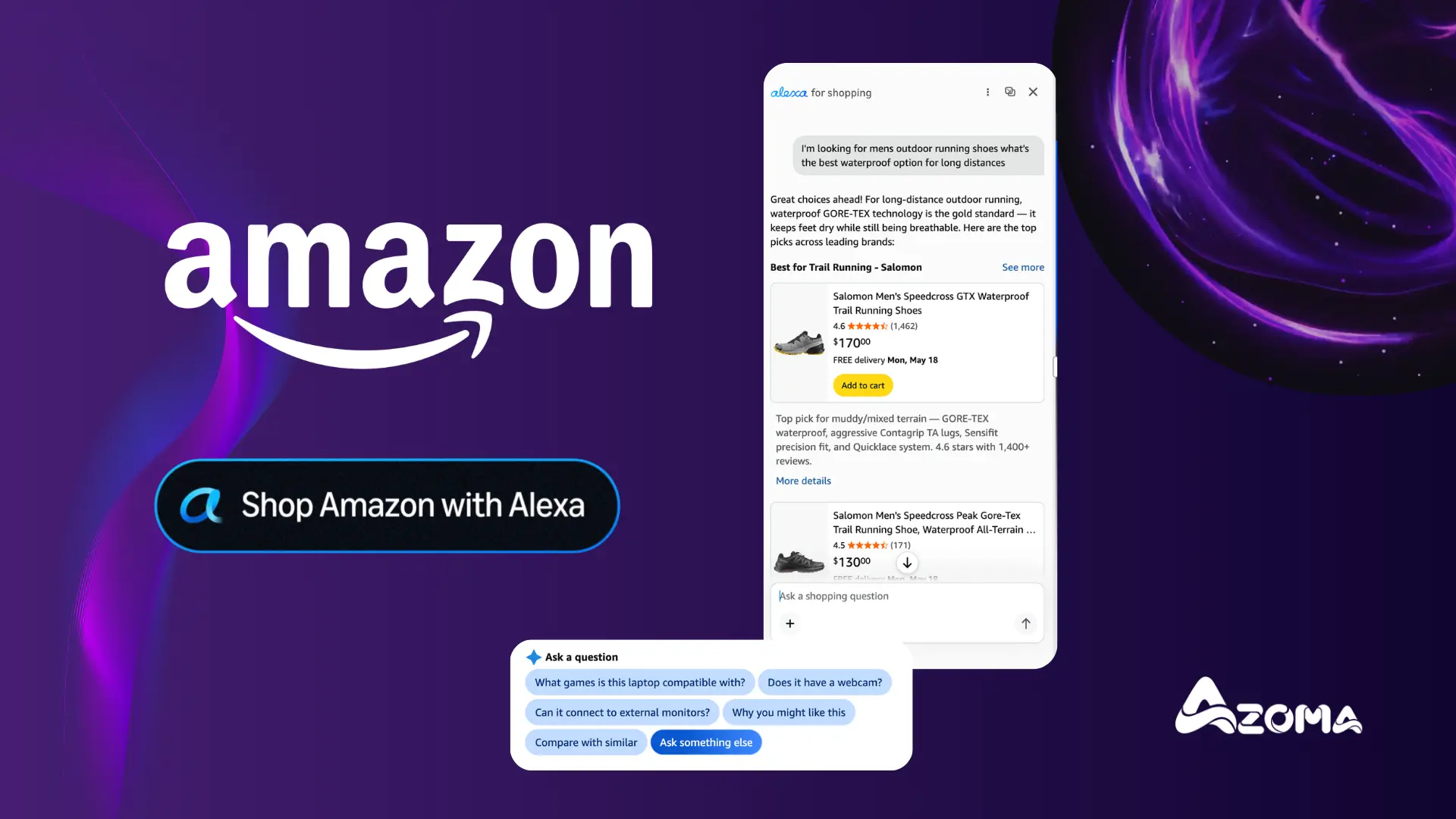

Amazon has merged Rufus, its conversational shopping agent, with Alexa to launch a new shopping agent: Alexa for Shopping. It is rolling out to all US customers over the coming week across the Amazon Shopping app, Amazon.com, and Echo Show, replacing Rufus across the site.

This is not a cosmetic rebrand. It is the unification of Amazon's two most important AI surfaces, the household assistant and the on-site shopping agent, into a single, memory-sharing brain. And it tells us exactly where Amazon thinks commerce is going.

As Rajiv Mehta, VP of Conversational Shopping at Amazon, put it: "Alexa for Shopping is like having an expert personal shopper who already knows you and remembers your preferences, your past purchases, and your conversations, and carries that knowledge and understanding of you across your phone, laptop, and Echo devices. Whether you're comparing products, tracking a price drop, or continuing research you started yesterday, you don't have to start over."

Rufus was the proof point

The numbers behind this move are staggering. According to Amazon, Rufus was used by more than 300 million customers in 2025 and delivered $12 billion in incremental annualised sales, and shoppers who use Rufus are 60% more likely to complete a purchase. By Black Friday 2025, Rufus had hit 38% adoption, and on that single day AI chatbots and agents drove $14.2 billion in global sales, including $3 billion in the US.

Rufus did not just validate conversational commerce. It proved that shoppers, given the choice, prefer conversing over searching.

One AI assistant, shared memory, every surface

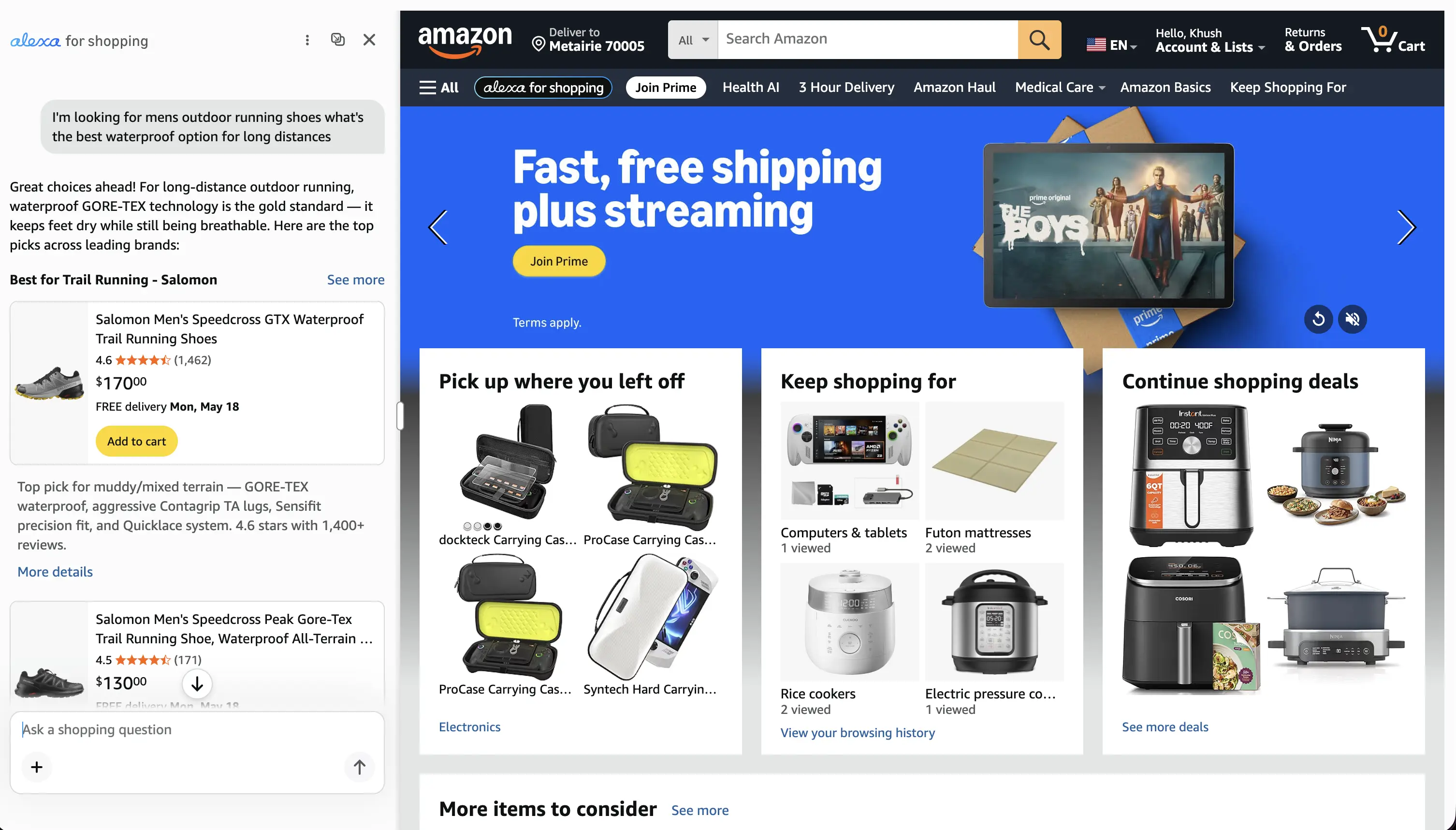

Amazon is now bringing Rufus and Alexa+ together to create one AI assistant with shared memory. As Amazon describes it: "What you share with Alexa on your Echo and other Alexa-enabled devices informs your shopping experience on Amazon, and your conversations, browsing, and purchases on Amazon make Alexa more helpful across all your experiences, including on Amazon.com. Your conversations and preferences flow in both directions, making Alexa for Shopping more personal and more helpful over time."

In practical terms, the assistant now remembers context across every Amazon surface: Echo devices, Echo Show, Fire TV, Fire tablets, the Alexa app, Alexa.com, the Amazon Shopping app, and Amazon.com. Preferences, interests, household details (family members, pets, dietary needs), past purchases, and conversations all follow you wherever you go.

This is Amazon's answer to the agentic commerce shift. Over the last 20 years, Amazon built the best website for ecommerce. Over the next 20, they intend to build the best AI shopping agent. And they are not bolting it on. They are rebuilding the front door.

This was always the plan: Jassy & Amazon's Patent

None of this is surprising given what Andy Jassy wrote in his 2025 letter to shareholders, or what Amazon's recent patent activity reveals about how long this fusion has been in the works.

With 600 million active Alexa endpoints across devices, cars, offices, Fire TV, and Prime Video, Jassy described how Amazon had to "completely rewire her brain, corresponding intelligence, breadth of knowledge, routing of services and APIs she accesses, and what routines and jobs she could do" to capitalise on generative AI.

The early results are extraordinary. Customers are now:

Talking to Alexa 2x as much (and for longer durations across a wider breadth of topics)

Completing purchases on devices 3x more often

Streaming music 25% more

Using smart home features 50% more

Jassy framed this as part of a broader philosophy he calls "going back to the starting line." When a transformative technology arrives, you have to reimagine the experience from a clean sheet of paper, even when the existing product is working at scale. Alexa for Shopping is exactly that. It is Amazon refusing to just "add a little AI to the existing experience" and instead rebuilding the retail interface from first principles.

If Jassy's letter is the strategic intent, Patent US 12141529 is the architectural footprint. Surfaced and analysed brilliantly by Andrew Bell (featured in Forbes by Kiri Masters), this recently granted Amazon patent gives a glimpse into the technological framework Amazon has been quietly building to combine Alexa's voice capabilities with Rufus-style product intelligence.

Important caveat: this patent is not the technical blueprint for Alexa for Shopping itself, and the live experience still looks and behaves largely like Rufus, with the same conversational interface, the same data sources, and COSMO doing the semantic heavy lifting underneath. What the patent shows is the direction of travel. Amazon has been putting the architectural pieces in place to merge these two systems for a while.

What's new with Alexa for Shopping

On the surface, the interface still looks largely the same as Rufus, with the Alexa logo replacing the familiar Rufus one. But under the hood, a slew of new capabilities have shipped.

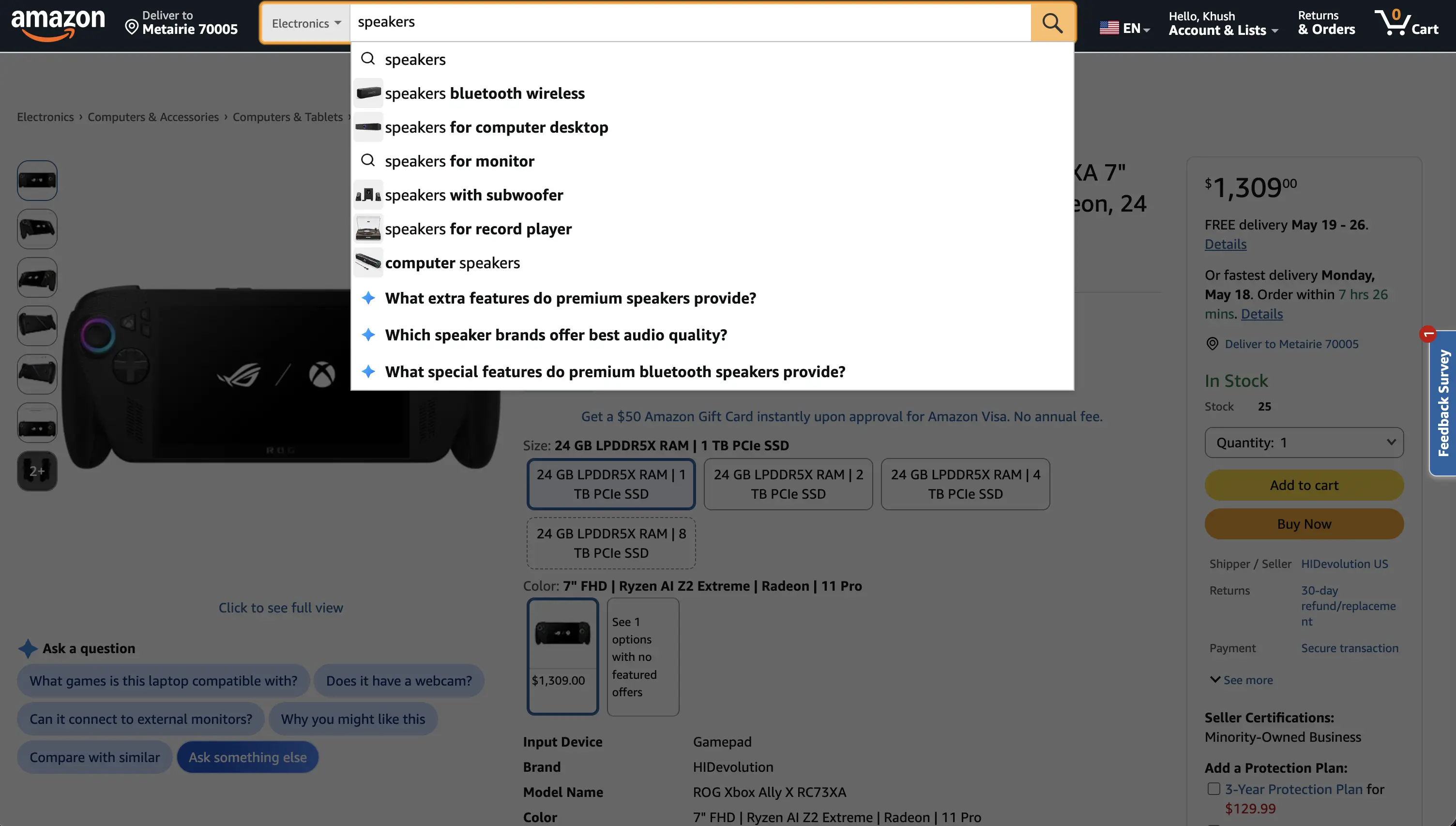

1. Questions in the main Amazon search bar.

This is the most consequential change. Shoppers no longer need to open a dedicated chat window. They can now ask Alexa for Shopping questions directly in the main Amazon search bar, and the experience recognises when the input is a question rather than a keyword string. That covers everything from broad top-of-funnel queries ("What's a good skincare routine for men?") to bottom-of-funnel comparisons ("Best face cream for dry skin").

In Amazon's own words from the FAQ: "if we determine that your search query is best answered by Alexa, we may take you to Alexa for Shopping and start a conversation."

The standard product listing page is no longer the default destination for every search.

2. AI overviews in search results and on PDPs.

Alexa for Shopping now surfaces AI-generated overviews at the top of search results in the Amazon Shopping app, giving shoppers a quick summary of the category and what to look for before they start browsing. The same overviews appear on product detail pages to help inform purchase decisions. The feature is already available to millions of shoppers and is rolling out to all US shoppers.

3. Dynamic side-by-side product comparisons.

Shoppers can select multiple products directly from search results and Alexa for Shopping will compare them side by side on features, prices, and reviews. This is no longer a feature locked inside a chat. It is integrated into the core search experience.

4. Scheduled Actions: automated, conditional shopping.

Tap the "+" icon in the chat window and you can create a Scheduled Action.

Examples Amazon highlights include adding healthy kids' snacks to your cart every month, restocking pet food or paper towels on a schedule, getting alerts when a favourite author releases a new book, or conditional logic like "Add this sunscreen to my cart if the price drops to $10 and I haven't purchased it in the last 2 months."

Alexa handles the product research and either notifies you or adds items directly to your cart. This is the first proper agentic commerce surface on Amazon, where the assistant acts autonomously on your behalf based on rules you set.
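To make the conditional example concrete, here is a minimal sketch of how a rule like the sunscreen one could be evaluated. It is an illustration only, not Amazon's implementation: `PriceRule`, `should_add_to_cart`, and the 60-day approximation of "2 months" are all assumptions introduced for this example.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class PriceRule:
    """Hypothetical rule: add to cart at a target price, unless bought recently."""
    target_price: float
    min_days_since_purchase: int  # assumption: "2 months" approximated as 60 days


def should_add_to_cart(rule: PriceRule, current_price: float,
                       last_purchased: Optional[date], today: date) -> bool:
    # Condition 1: the price must be at or below the shopper's target.
    if current_price > rule.target_price:
        return False
    # Condition 2: the item was never bought, or the last purchase is old enough.
    if last_purchased is None:
        return True
    return (today - last_purchased) >= timedelta(days=rule.min_days_since_purchase)


sunscreen_rule = PriceRule(target_price=10.00, min_days_since_purchase=60)
today = date(2026, 6, 1)

# Price is under target and the last purchase was three months ago.
print(should_add_to_cart(sunscreen_rule, 9.99, date(2026, 3, 1), today))  # True
```

Both conditions must hold before the item is added, which is why a recent purchase blocks the action even when the price target is met.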

6. Conversational cart-building from past orders.

Say "add my regular dog treats" or "add my frequently ordered cleaning products" or "add my favourite protein bars to my cart" and Alexa will pull from your purchase history and build the cart. Check out with a single tap.

7. A full year of price history.

Tap "Price History" on any PDP or ask Alexa directly to see how the price has changed over the past year on hundreds of millions of products. Combined with price alerts and Auto Buying, this turns Alexa for Shopping into a price-tracking agent that buys at your target price automatically.

8. Custom shopping guides for big purchases.

Whether you are buying a TV or exploring a brand new category, Alexa for Shopping can generate a custom shopping guide that compares features, prices, and reviews across Amazon and the web based on what matters most to you.

9. Personalisation you can inspect and edit.

You can ask Alexa for Shopping what it knows about you (family members, pets, interests, dietary needs) and update those details by simply asking. The personalisation layer is no longer a black box.

10. Voice shopping in the Amazon app.

Tap the mic icon in the chat window to use voice to shop with Alexa directly inside the Amazon app.

What are the implications for Amazon sellers?

The good news for brands and sellers already optimising for Rufus is that the playbook remains the same. In Amazon's own words from the FAQ:

"Alexa uses information from a variety of sources to provide answers to your shopping questions, including information from Amazon's product catalog, customer reviews, community Q&As, licensed content partners, and information from across the web."

This is almost identical to how Rufus worked. The same data sources, the same retrieval logic, the same emphasis on clear, structured, intent-rich PDP content. The COSMO knowledge graph still sits underneath, doing the semantic heavy lifting. Rufus optimisation levers (noun phrase usage, feature-to-benefit mapping, Q&A content, visual label tagging) all still apply.

What has changed is the personalisation layer sitting on top and the range of surfaces where your product is being evaluated. Alexa for Shopping does not just retrieve the most semantically relevant products for a query. It retrieves the most semantically relevant products for this specific shopper, given everything Alexa has ever learned about them, across the search bar, side-by-side comparisons, AI overviews on PDPs, and Scheduled Actions.

Rich, context-laden PDPs are no longer just a ranking advantage. They are how your product gets matched to the right persona in the first place, and how it stays in consideration when the AI is comparing it head-to-head, summarising it in an overview, or auto-buying it on someone's behalf.

What sellers should do today to optimise for Alexa for Shopping

The foundations from the Rufus era still apply. The bar has just been raised.

1. Get the COSMO and Rufus fundamentals right.

Structured titles built around clear noun phrases (product type + defining attribute + primary purpose). Bullets that explicitly map features to customer outcomes. Backend attributes filled out completely so the commonsense graph (used_for, used_by, used_in) can resolve intent correctly. Visual label tagging on imagery so OCR-readable callouts feed the semantic match. If you have not closed these gaps yet, do it now. Everything that follows builds on top.
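A simple way to find these gaps across a catalogue is to audit each listing against the checklist above. The sketch below is illustrative only: the `Listing` class, the `audit` function, and the use of `used_for`, `used_by`, and `used_in` as backend field names are assumptions for this example, not an Amazon schema.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical field names, borrowed from the relation labels mentioned above.
REQUIRED_RELATIONS = ("used_for", "used_by", "used_in")


@dataclass
class Listing:
    product_type: str
    defining_attribute: str
    primary_purpose: str
    backend_attributes: Dict[str, str] = field(default_factory=dict)

    def title(self) -> str:
        # Noun-phrase title: product type + defining attribute + primary purpose.
        return f"{self.product_type}, {self.defining_attribute}, for {self.primary_purpose}"


def audit(listing: Listing) -> List[str]:
    """Return a list of gaps: empty title parts and empty backend relations."""
    gaps = []
    for name in ("product_type", "defining_attribute", "primary_purpose"):
        if not getattr(listing, name).strip():
            gaps.append(f"title part missing: {name}")
    for relation in REQUIRED_RELATIONS:
        if not listing.backend_attributes.get(relation, "").strip():
            gaps.append(f"backend attribute missing: {relation}")
    return gaps


listing = Listing(
    product_type="Face Cream",
    defining_attribute="Fragrance-Free",
    primary_purpose="Dry Skin",
    backend_attributes={"used_for": "daily moisturising", "used_by": "adults with dry skin"},
)
print(listing.title())  # Face Cream, Fragrance-Free, for Dry Skin
print(audit(listing))   # ['backend attribute missing: used_in']
```

Running a check like this over every ASIN gives a prioritised list of listings to fix before moving on to the later steps.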

2. Optimise for the new AI overview surface.

AI overviews now sit at the top of search results and on the PDP itself. The content Alexa pulls into those summaries is generated from your listing, your reviews, your Q&A, and external sources. If your bullets and A+ content do not clearly state what the product is, who it is for, and why it solves a problem, the AI will either gloss over you or fill the gap with a competitor's framing.

3. Win the head-to-head comparison.

With side-by-side product comparisons now native to the search experience, shoppers will increasingly see your product evaluated directly against competitors on features, price, and reviews. Make sure the comparable attributes are explicit and machine-readable, and that your differentiators are stated clearly in the copy, not buried in marketing language.
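One way to pressure-test a listing for comparison readiness is to line it up against a competitor on the attributes a shopper is likely to compare and see which cells come back empty. This is a rough sketch under stated assumptions: the product data, the attribute names, and the helper functions are invented for illustration and do not reflect how Amazon builds its comparisons.

```python
from typing import Dict, List, Optional

Product = Dict[str, Optional[str]]


def comparison_table(products: Dict[str, Product],
                     attributes: List[str]) -> Dict[str, Dict[str, str]]:
    """Build an attribute-by-product table, marking unstated values explicitly."""
    return {
        attr: {name: (p.get(attr) or "not stated") for name, p in products.items()}
        for attr in attributes
    }


def unstated(products: Dict[str, Product], attributes: List[str], name: str) -> List[str]:
    """List the comparable attributes a given product leaves blank."""
    return [attr for attr in attributes if not products[name].get(attr)]


# Hypothetical catalogue data for two competing face creams.
products = {
    "Our cream": {"spf": None, "size": "50 ml", "skin_type": "dry"},
    "Rival cream": {"spf": "SPF 30", "size": "50 ml", "skin_type": "all"},
}
attributes = ["spf", "size", "skin_type"]

print(comparison_table(products, attributes)["spf"])
# {'Our cream': 'not stated', 'Rival cream': 'SPF 30'}
print(unstated(products, attributes, "Our cream"))  # ['spf']
```

Every "not stated" cell is a row where the competitor's value is the only one the shopper sees.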

4. Understand and influence the off-page sources Alexa pulls from.

Alexa explicitly draws on "licensed content partners, and information from across the web." That means your product listing is no longer the only surface that matters. Reviews on third-party sites, retailer content, expert roundups, editorial coverage, and category guides all feed Alexa's answer. Brands need a coordinated view of where they show up off-Amazon, and a plan to be present and accurate in those sources.

➡️ Azoma's platform is built precisely for this, mapping the full set of sources AI shopping agents actually retrieve from and showing you where your brand is winning, losing, or missing entirely.

5. Treat rich, context-laden content as a competitive moat.

With personalisation now baked into every result, generic copy gets sorted last. The PDP needs to clearly express what the product is, who it is for, how it is used, where it is used, and what occasion or environment it suits. Ambiguity is penalised. Explicitness is rewarded. The brands that win will be the ones that help Alexa understand their products as clearly as a well-informed sales associate would.

6. Know your ideal customers and build personas around them.

This is now mission-critical. Because Alexa personalises responses against shopper profile data (family members, pets, dietary needs, past purchases, household context), you need to know exactly which personas you are trying to win and how each one phrases their needs.

➡️ Azoma's digital twin tech lets you track Alexa rank for hyper-specific personas, so you can see how a "new parent shopping for a baby monitor on a tight budget" sees your product versus a "tech-forward early adopter buying their second smart home device." If you cannot see what each persona sees, you cannot optimise for them.

7. Earn a place in Scheduled Actions and reorder flows.

Conversational cart-building ("add my regular dog treats") and Scheduled Actions favour brands that customers have already bought and trusted. Subscribe & Save, repeat-purchase categories, and consumables are now even more strategic. If your product is the one a shopper says "add my favourite" about, you have effectively locked in recurring revenue at the AI layer.

8. Treat scaled listing optimisation as a core priority, not a side project.

With Alexa for Shopping now sitting inside the main search bar, surfacing AI overviews on every PDP, and routing more queries away from the standard PLP, every listing in your catalogue is an entry point for a conversation, not just a destination after a click.

You cannot manually rewrite thousands of ASINs for an agent-first world. Azoma optimises listings at scale across Amazon, Walmart, and Target, translating how AI shopping assistants actually reason into measurable PDP improvements.

👉 If you want an assessment of your PDP readiness for Alexa for Shopping and the agentic commerce era, get in touch with us today.

Summing Up

Alexa for Shopping is, in many ways, the experience Amazon has been building toward: a unified, memory-sharing, deeply personalised agent that follows the shopper across every Amazon surface. The conversational interface, the data sources, and the COSMO engine underneath are largely unchanged from Rufus, but the strategic intent and the technical foundation are both now on the record.

The Rufus era proved shoppers want to talk. Jassy's letter laid out why every customer experience will be reinvented by AI. Patent US 12141529 showed how Amazon would fuse voice, product intelligence, and personalisation into a single agent. Alexa for Shopping is the delivery of that vision.

What comes next is depth, not direction. Alexa will keep moving from answering questions to acting autonomously on behalf of the shopper, building carts, tracking prices, scheduling reorders, and increasingly buying directly from across the web through Buy for Me. The agent will do more of the journey, with less human input.

For sellers, the message is simple. The Rufus playbook still works, but the bar is now higher and the surfaces where your product is being evaluated have multiplied. The brands that win the next five years on Amazon will be the ones easiest for intelligent systems to trust, surface, compare, and act on, across every persona that matters to them.

The search bar is being replaced. The PDP is being summarised. The cart is being built for the shopper. If your listings are not ready for that world, every day you wait is a day a competitor's listing is teaching Alexa what to recommend instead.

Azoma is built for exactly this. We optimise listings at scale across Amazon, Walmart, and Target, and our digital twin tech lets you see how every persona that matters to your brand sees your product inside Alexa. Get in touch today for an assessment of your PDP readiness for Alexa for Shopping and the agentic commerce era.

Article Author: Max Sinclair